10.2: Naive Bayes and Model Construction#

Learning Objectives

Purpose

Introduce students to the manual construction of a supervised classification

model using Naïve Bayes, emphasizing how data preparation, feature selection,

and training decisions affect model behavior and evaluation.

Students Learn

- Define a fixed feature space by removing invariant fingerprint bits and preserving the feature mask.

- Create, save, and reload stratified training and test splits along with supporting metadata.

- Diagnose class imbalance in a training dataset and apply downsampling as a model-specific preprocessing step.

- Build a probabilistic Naïve Bayes classifier from training data.

- Generate and interpret confusion matrices for classification-based inference.

- Generate and interpret ROC curves for probability-based model evaluation.

Core Activities

- Organize model inputs, splits, and metadata into a reproducible directory structure.

- Construct a Naïve Bayes classifier step-by-step using saved training data.

- Evaluate model performance using both class predictions and predicted probabilities.

Prior Knowledge

- Complete Module 10.1: Data Preparation and Feature Engineering

- Complete Appendix A10.2: Bayes' Theorem: From Inference to Models

1. Preparation for model building#

We are building supervised learning models to predict the biological activity of small molecules. Each molecule is represented by a set of MACCS keys, and each molecule has an associated activity label: 1 for active and 0 for inactive.

For a single compound, the model can be written as:

where:

(X) is a feature vector containing the MACCS keys for that compound,

(y) is a scalar prediction (0 or 1),

(f) is the learned model that maps features to an activity prediction.

For an entire dataset of compounds, this generalizes to:

where:

\((\mathbf{X})\) is a feature matrix of shape (n_samples, n_features),

\((\mathbf{y})\) is a label vector of length n_samples.

We use uppercase X to indicate that the input data is multiple descriptors

In practice, the symbol X is used generically to denote the input features. Depending on context, X may refer to:

a single feature vector for one compound.

A feature matrix containing feature vectors for many compounds

Explanation

The use of uppercase X and lowercase y follows a long-standing convention in mathematics, statistics, and machine learning.

-

X(uppercase) typically represents a matrix of features when working with a dataset- Shape: (n_samples, n_features)

- Each row corresponds to one compound (observation)

- Each column corresponds to one descriptor or fingerprint bit

-

y(lowercase) represents a vector of target values (labels)- Shape: (n_samples,)

- Each element is the activity associated with one compound

In linear-algebra terms:

Xis a 2-dimensional object (a matrix)yis a 1-dimensional object (a vector)

This naming convention reflects the canonical supervised-learning equation:

\( y = f(\mathbf{X}) \)

where:

- the function

frepresents a learned model - the model takes a matrix of input features (X)

- and produces a vector of outputs (y)

In short:

- Uppercase

X→ feature matrix - Lowercase

y→ target vector

This convention is not enforced by Python, but it is widely adopted and helps communicate the structure of the data at a glance.

1.1 Loading the data into X and y.#

import pandas as pd

# read fingerprints with activities csv file into pandas dataframe

df_data = pd.read_csv("data/AID743139/features/AID743139_MACCS_activities_noSalt_20260205.csv") # Change to your file path if you need to load the dataframe

# we now have a dataframe with CIDS, activities and maccs keys

print(df_data.shape)

df_data.head(3)

(6793, 170)

| cid | activity | clean_smiles | maccs000 | maccs001 | maccs002 | maccs003 | maccs004 | maccs005 | maccs006 | ... | maccs157 | maccs158 | maccs159 | maccs160 | maccs161 | maccs162 | maccs163 | maccs164 | maccs165 | maccs166 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 12850184 | 0 | O=C(CO)[C@@H](O)[C@H](O)[C@@H](O)C(=O)[O-] | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

| 1 | 89753 | 0 | O=C([O-])[C@H](O)[C@@H](O)[C@H](O)[C@H](O)CO | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

| 2 | 9403 | 0 | C[C@]12CC[C@@H]3c4ccc(O)cc4CC[C@H]3[C@@H]1CC[C... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 1 | 0 | 1 | 1 | 1 | 1 | 0 |

3 rows × 170 columns

We will put the MACCS Keys into a variable called X_MACCS, the feature Matrix.

We will put the activity values into a variable called y, the label vector.

X_MACCS = df_data.iloc[:,3:] # this is dropping cid, activity, and clean_smiles and creating a new variable for maccs data

y = df_data['activity'].values # note, df_data is a pd dataframe, but .values makes it a np array

# Print head of feature matrix

X_MACCS.head(3)

| maccs000 | maccs001 | maccs002 | maccs003 | maccs004 | maccs005 | maccs006 | maccs007 | maccs008 | maccs009 | ... | maccs157 | maccs158 | maccs159 | maccs160 | maccs161 | maccs162 | maccs163 | maccs164 | maccs165 | maccs166 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 1 | 0 | 1 | 1 | 0 | 1 | 1 | 1 | 1 | 0 |

3 rows × 167 columns

import numpy as np

print(y[:5])# prints first 5 values of the label vector

print(np.unique(y)) # prints all unique values of label vector

[0 0 0 0 0]

[0 1]

# Write the code to print length of label vector (y) and number of active compounds (hint, they are zeros and ones, so you can sum them)

print(len(y))

print(y.sum())

6793

743

1.2 Feature Selection: Remove zero-variance features#

Some features in X are not helpful in distinguishing actives from inactives, because they are set ON for all compounds or OFF for all compounds. Such features need to be removed because they would consume more computational resources without improving the model.

We will use the VarianceThreshold method of sklearn to identify which features have a variance of zero or very low. Variance in data represents how spread out the values of a feature are. The threshold parameter is set to 0.0 by default, meaning only features with zero variance (constant values across all samples, 100% identical values) are removed.

What if a feature has ≥99% identical values?

Explanation

Let’s say a feature is1 in 99.5% of rows and 0 in the remaining 0.5%. It does not have zero variance, but the variance is very low.

If you want to remove such near-constant features, you need to set threshold accordingly. In this case the variance is calculated as:

where p is probability of the feature being 1

So to remove this feature, your threshold must be greater than 0.004975, for example:

VarianceThreshold(threshold=0.005)

It might be interesting to see how our models change, or time calculating the model changes if we do some prefiltering by adjusting the threshold.

from sklearn.feature_selection import VarianceThreshold

X_MACCS.shape #- Before removal

(6793, 167)

import numpy as np

from sklearn.feature_selection import VarianceThreshold

# Apply variance threshold

sel = VarianceThreshold(threshold=0.0)

X_MACCS_filtered = sel.fit_transform(X_MACCS)

# Boolean mask of retained features

mask = sel.get_support()

# Human-readable feature names

kept_features = X_MACCS.columns[mask]

removed_features = X_MACCS.columns[~mask]

print("Features removed:", list(removed_features))

print("Filtered feature matrix shape:", X_MACCS_filtered.shape)

# ---- FEATURE METADATA (generated here) ----

feature_metadata = {

"fingerprint": "MACCS",

"original_bits": X_MACCS.shape[1],

"selected_bits": int(mask.sum()),

"removed_bits": int((~mask).sum()),

"selection_method": "VarianceThreshold",

"threshold": 0.0,

"removed_feature_names": list(removed_features),

"source_file": "AID743139_MACCS_activites_noSalt_20260104_v1.csv",

"notes": "Invariant MACCS bits removed prior to modeling"

}

Features removed: ['maccs000', 'maccs001', 'maccs002', 'maccs004', 'maccs166']

Filtered feature matrix shape: (6793, 162)

A MACCS fingerprint has 166 bit positions but the RDKit MACCS fingerprint has 167. This position has zeros for all molecules and is removed regardless of the threshold, can you think of why this position is set to zero?

Answer

Themaccs000 position is always zero because it is a

dummy bit added by RDKit for bookkeeping purposes.

The canonical MACCS fingerprint defines 166 chemically meaningful keys numbered 1–166 (not 167).

By including a zero-valued bit at position 0, RDKit allows the bit index to match the MACCS key number directly (e.g., bit 1 → MACCS key 1), avoiding off-by-one confusion when labeling features.

Because this dummy bit carries no chemical information and has zero variance across all molecules, it is removed during feature preprocessing.1.3 Feature Selection: Freezing the Feature Definition (Mask and Metadata)#

Up to this point, we have generated MACCS fingerprints for all compounds and examined which fingerprint bits vary across the dataset. When we remove invariant bits using a variance filter, we are no longer just manipulating data—we are defining the feature space that every downstream model will use. From this moment forward, a “feature” has a specific meaning: it is one of the MACCS bits that survived this filtering step. This process is known as feature selection: deciding which descriptors are informative enough to be included in the model and which should be excluded.

Because this feature selection step is learned from the data, it must be treated as part of the scientific record. Any model trained on these data—whether Naive Bayes, Decision Trees, or future methods—must use exactly the same feature definition in order to be valid and comparable. To ensure reproducibility and avoid hidden assumptions, we explicitly save the feature mask (a Boolean array indicating which MACCS bits were kept or removed) as a numpy file, along with descriptive metadata explaining how and why the selection was performed as a json file.

By freezing the feature definition here (meaning it will not change for this dataset), we create a clear boundary between data preparation and modeling. All subsequent notebooks and models will load and reuse this saved feature definition rather than recomputing it, guaranteeing that results remain consistent even across kernel restarts, new environments, or alternative machine-learning methods.

from pathlib import Path

import json

import numpy as np

from cinf26pk.core import make_filename, make_fixed_filename

#PROJECT_ROOT = Path.cwd() # current working directory

FEATURES = Path("data/AID743139/features")

FEATURES.mkdir(parents=True, exist_ok=True)

# Save variance mask

mask_fname = make_filename(

prefix="AID743139_MACCS_variance_mask",

ext="npy"

)

np.save(FEATURES / mask_fname, mask)

# Save metadata JSON

meta_fname = make_filename(

prefix="AID743139_MACCS_feature_metadata",

ext="json"

)

with open(FEATURES / meta_fname, "w") as f:

json.dump(feature_metadata, f, indent=2)

print(f"[Saved] Variance mask → {FEATURES/mask_fname}")

print(f"[Saved] Feature metadata → {FEATURES/meta_fname}")

[Saved] Variance mask → data/AID743139/features/AID743139_MACCS_variance_mask_20260205_v1.npy

[Saved] Feature metadata → data/AID743139/features/AID743139_MACCS_feature_metadata_20260205_v1.json

Explain the role of the following three files in the features directory. These three files together define the feature representation and labeling scheme used for model training and evaluation. They must be treated as a matched set.

AID743139_MACCS_activites_noSalt_.csv

This CSV file contains the core machine-learning dataset. Each row corresponds to a single PubChem compound (CID) and includes:- The PubChem Compound ID (CID)

- A binary activity label (0 = inactive, 1 = active) derived from the assay outcomes

- A MACCS fingerprint vector, which will be used to construct the feature matrix (X) for model training

AID743139_MACCS_feature_metadata_.json

This JSON file records the feature-engineering decisions applied to the MACCS fingerprints. It serves as documentation and provenance for how the feature matrix was constructed.Typical contents include:

- Which MACCS bit positions were removed (e.g., invariant bits such as MACCS000)

- The reason for removal (e.g., zero variance across all compounds)

- The original fingerprint length and the final feature count

- Any parameters or assumptions used during fingerprint processing

- Reproduce the feature matrix exactly

- Understand why certain bits are missing

- Apply the same preprocessing rules to future datasets or external test compounds

AID743139_MACCS_variance_mask_.npy

This NumPy file contains the Boolean variance mask used to filter the MACCS fingerprints. The mask is a 1-D array with one entry per original MACCS bit:

- True → keep this bit

- False → drop this bit

- Transform raw MACCS fingerprints into the final feature matrix

- Ensure consistency between training data, test data, and future predictions

1.4 Reload Data#

Where we are in the Supervised Learning Pipeline

In this module, we are continuing a supervised learning pipeline that began with data preparation. We first downloaded BioAssay data from PubChem and stored the unmodified exports in the /raw directory. We then curated these data to remove invalid records and standardize chemical representations, saving the cleaned results in /curated. From this curated dataset, we generated molecular fingerprints (MACCS keys) and applied feature-level filtering to remove invariant bits, producing a refined feature representation. The resulting fingerprint dataset, along with the feature-selection mask and metadata documenting how the features were constructed, was saved in the /features directory as non-volatile .csv and .npy files. In the current notebook, we reload these saved feature artifacts and use them to define train/test splits, which establish the experimental framework for the modeling activities that follow. These splits will support multiple supervised learning models—such as Naive Bayes, Decision Trees, Random Forests, and k-Nearest Neighbors—while ensuring that all models are trained and evaluated on a consistent molecular representation.

Note: In this notebook, the term pipeline refers to a conceptual workflow, a sequence of data transformations and modeling steps applied consistently. In later notebooks, this workflow will be formalized into reusable and scalable pipeline objects.

1.4.1 Regenerate X_MACCS#

X_MACCS is the original unfiltered feature matrix

This is the “ground truth representation”

# Step 1 Load source feature data

import pandas as pd

df_data = pd.read_csv(

"data/AID743139/features/AID743139_MACCS_activities_noSalt_20260205.csv"

)# adjust code to your file

# Separate labels

y = df_data["activity"].values

# Reconstruct full MACCS feature matrix

X_MACCS = df_data.iloc[:, 3:] # CID, activity, clean_smiles dropped

print(X_MACCS.shape)

(6793, 167)

1.4.2 Load and apply saved variance mask#

Mask is 1D

length must equal

X_MACCS.shape[1]

import numpy as np

# Load saved variance mask

mask = np.load(

"data/AID743139/features/AID743139_MACCS_variance_mask_20260205_v1.npy"

)# adjust code to your file path

print("Mask shape:", mask.shape)

print(mask.sum(), "features retained")

# Safety check: ensure mask matches feature matrix

assert X_MACCS.shape[1] == mask.shape[0], (

"Incompatible feature mask: "

"mask length does not match number of MACCS features. "

"Check that the CSV and mask were generated from the same dataset."

)

Mask shape: (167,)

162 features retained

1.4.3 Reconstruct the filtered feature matrix X_MACCS_filtered#

In this step, we reconstruct the filtered MACCS feature matrix by reapplying a previously learned transformation. No feature selection is performed here; instead, we reuse the saved variance mask to ensure that the exact same feature definition is recovered after a kernel restart. The reconstruction is performed using a Pandas DataFrame so that feature alignment and consistency can be verified. Once feature semantics are finalized, the filtered feature matrix is explicitly converted to a NumPy array in preparation for downstream modeling, where only numeric operations are required.

X_MACCS_filtered = X_MACCS.loc[:, mask]

print(X_MACCS_filtered.shape)

# Decide representation for modeling

X_MACCS_filtered = X_MACCS_filtered.to_numpy() # <-- optional but explicit

(6793, 162)

1.5 Train-Test-Split (a 9:1 ratio)#

Now that we’ve prepared the dataset, the next step is to divide it into two parts: one for training the model and one for testing it. This is important because we want to evaluate how well the model performs on unseen data, and not just the data it was trained on.

This is typically done by splitting the dataset into two subsets using a specified ratio. Common splits include 80:20 or 70:30, where the larger portion is used for training and the smaller for testing. When the dataset is small or the model requires more examples to learn effectively, a 90:10 split can be helpful.

In the next code section, we will split the data so that 90% goes into the training set and 10% into the test set. The training set is used to build the model, while the test set is used to evaluate how well the model generalizes to new data. Sklearn’s train_test_split creates several NumPy arrays at once by applying the same randomized split to aligned data. We include the DataFrame index so we can recover which chemical compounds ended up in the training and test sets.

It is important that this train–test split is performed before any model-building decisions, such as class balancing, downsampling, or reweighting. These operations will later be applied only to the training data, never to the test data. By splitting the dataset at this stage and saving the resulting arrays, we preserve an untouched test set that represents the original data distribution. This ensures that model evaluation reflects true generalization performance rather than artifacts introduced during training.

The following table summarizes the key parameters used in the upcoming train_test_split() call. Review these options before running the code, as they determine how the training and test sets are constructed.

Parameter |

Value Used |

Purpose in This Workflow |

|---|---|---|

|

feature matrix |

The input feature matrix containing MACCS fingerprints after variance filtering. |

|

label vector |

Binary activity labels (0 = inactive, 1 = active) aligned row-by-row with |

|

row identifiers |

Preserves the original row identity so compounds can be traced after splitting. |

|

|

Holds out 10% of the data for testing, leaving 90% for training. |

|

|

Randomizes the order of samples before splitting to avoid ordering bias. |

|

|

Fixes the random number generator seed so the split is reproducible. |

|

|

Ensures the training and test sets retain the same active/inactive class ratio as the original dataset. |

In particular, the stratify=y argument is critical for classification problems with class imbalance. It guarantees that both the training and test sets reflect the original class distribution, preventing accidental bias in model evaluation caused by uneven splits.

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test, idx_train, idx_test = train_test_split(

X_MACCS_filtered,

y,

df_data.index, # preserve row identity across the split

shuffle=True,

random_state=3100, # make the split reproducible

stratify=y, # preserve class balance

test_size=0.1 # 10% of data held out for testing

)

print("Training set shape:", X_train.shape, y_train.shape)

print("where there are", X_train.shape[0], "samples, and", X_train.shape[1], "features")

print("and", y_train.shape[0], "activities associated with the training set.")

print()

print("Test set shape:", X_test.shape, y_test.shape)

print("where there are", X_test.shape[0], "samples, and", X_test.shape[1], "features")

print("and", y_test.shape[0], "activities associated with the test set.")

print()

print("Number of active compounds in training set:", y_train.sum())

print("Number of active compounds in test set:", y_test.sum())

Training set shape: (6113, 162) (6113,)

where there are 6113 samples, and 162 features

and 6113 activities associated with the training set.

Test set shape: (680, 162) (680,)

where there are 680 samples, and 162 features

and 680 activities associated with the test set.

Number of active compounds in training set: 669

Number of active compounds in test set: 74

What does this line of code do?

X_train, X_test, y_train, y_test, idx_train, idx_test = train_test_split(

X_MACCS,

y,

df_data.index, # preserve row identity across the split

shuffle=True,

random_state=3100, # make the split reproducible

stratify=y, # preserve class balance

test_size=0.1 # 10% of data held out for testing

))

Explanation

This line calls scikit-learn’s train_test_split() function to partition the data into training and test sets in a way that is safe for machine learning.

Before this line:

X_MACCS_filtered is a 2-D numerical array (or array-like object) containing molecular features (rows = compounds, columns = MACCS fingerprint bits).

y is a 1-D numerical array containing the corresponding activity labels (one value per compound).

What train_test_split() does:

Splits the rows of X and y together so that each compound’s feature vector stays paired with its activity label.

Creates four NumPy arrays

X_train – feature matrix used to train the model

X_test – feature matrix held back for evaluation

y_train – activity labels for the training set

y_test – activity labels for the test set

idx_train - row numbers of training samples

idx_test - row numbers of test samples

Uses NumPy-style array slicing internally. Which is why the outputs expose NumPy attributes like .shape.

Shuffles the data before splitting (shuffle=True). This prevents ordering artifacts (e.g., all actives grouped together).

Preserves class balance (stratify=y). The fraction of active vs. inactive compounds is maintained in both the training and test sets.

Ensures reproducibility (random_state=3100). The same split will be generated every time this code is run.

Reserves 10% of the data for testing (test_size=0.1) The remaining 90% is used for model training.

We removed CID, Index and SMILES and so there is no way to go back to which chemical is which, and idx_train/test does that

In short, this single line converts your feature table and labels into four NumPy arrays structured exactly the way scikit-learn expects, establishing the data layout used throughout machine-learning workflows. |

1.5.1 Save the test/train split as np arrays#

from pathlib import Path

import json

import numpy as np

# ------------------------------------------------------------

# Define split configuration

# ------------------------------------------------------------

SPLIT_NAME = "90_10"

SPLITS = Path("data/AID743139/splits") / SPLIT_NAME

SPLITS.mkdir(parents=True, exist_ok=True)

# Optional subfolder for arrays (keeps things tidy)

ARRAYS = SPLITS / "arrays"

ARRAYS.mkdir(exist_ok=True)

# ------------------------------------------------------------

# Save NumPy arrays

# ------------------------------------------------------------

np.save(ARRAYS / "X_train.npy", X_train)

np.save(ARRAYS / "X_test.npy", X_test)

np.save(ARRAYS / "y_train.npy", y_train)

np.save(ARRAYS / "y_test.npy", y_test)

# Save index mappings (critical for traceability)

np.save(SPLITS / "train_idx.npy", idx_train)

np.save(SPLITS / "test_idx.npy", idx_test)

# ------------------------------------------------------------

# Save CID traceability

# ------------------------------------------------------------

df_data.loc[idx_train, ["cid"]].to_csv(

SPLITS / "train_cids.csv",

index=False

)

df_data.loc[idx_test, ["cid"]].to_csv(

SPLITS / "test_cids.csv",

index=False

)

# ------------------------------------------------------------

# Save split metadata

# ------------------------------------------------------------

split_metadata = {

"assay": "AID743139",

"split_name": SPLIT_NAME,

"description": "Stratified 90/10 train/test split",

"test_fraction": 0.10,

"train_fraction": 0.90,

"stratified": True,

"random_state": 3100,

"labels": "Active vs Inactive",

"features": "MACCS (variance-filtered)",

"source_feature_file": "AID743139_MACCS_activities_noSalt_20260205.csv"

}

with open(SPLITS / "split_metadata.json", "w") as f:

json.dump(split_metadata, f, indent=2)

print(f"[Saved] Train/test split → {SPLITS}")

[Saved] Train/test split → data/AID743139/splits/90_10

The train/test split produces several files that serve different roles in the machine-learning workflow. Some are used directly for model training, while others preserve traceability, reproducibility, and interpretability. The table below summarizes what was saved and why.

Purpose |

Files |

Why They Exist |

|---|---|---|

Model inputs |

|

Numerical feature matrices and label vectors used directly for model training and evaluation. |

Operational split definition |

|

Exact row indices used to construct the split, allowing it to be verified, reconstructed, or reused. |

Chemical identity traceability |

|

Maps rows in the NumPy arrays back to compound identifiers for inspection, reporting, and auditing. |

Provenance & metadata |

|

Documents how the split was created, including parameters and source files, as part of the scientific record. |

Not all of these files are used immediately in this notebook. Some exist to support later model evaluation, interpretation, or reproducibility, and will be revisited in subsequent sections and notebooks.

Explain the role of the following files in the splits/90_10 directory. Be sure to open the files! You can open the *.csv and *.json files directly from the jupyter lab file browser. You can open the *.npy files by executing the following in a code cell (and altering file name as appropriate).

SPLIT_NAME = "90_10"

SPLITS = Path(“data/AID743139/splits”) / SPLIT_NAME

SPLITS.mkdir(parents=True, exist_ok=True)

ARRAYS = SPLITS / “arrays”

ARRAYS.mkdir(exist_ok=True)

test_idx = np.load(SPLITS / “arrays/X_test.npy”)

test_idx

split_metadata.json

Documents how the split was performed- Train/test ratio (90/10)

- Random seed used

- Apply stratification (maintain same active/inactive ratio of test and train sets as was in the original set

- Source feature file name

train_cids.csv and test_cids.csv

Identify which compounds (CIDs) ended up in test and traing set. These files are for:- Inspection

- Reporting

- Debugging

train_idx.npy and test_idx.npy

The train and test index files define the data split operationally by storing the exact row positions used to slice the feature matrix (X) and label vector (y). Applying these indices guarantees that every model, even when developed in different notebooks or with different algorithms, is trained and evaluated on the exact same compounds. Chemical identifiers such as CIDs are stored separately for interpretation and auditing, but the model itself only ever sees rows of numerical features selected by these indices.

Once the split has been applied and saved as training and test arrays, the index files are no longer needed for routine model training or evaluation. They are retained as permanent artifacts to support traceability, auditing, and reproducibility, allowing the split to be verified, reconstructed, or reused if the feature representation is regenerated in the future.

X_train.npy and y_train.npy

These files contain the training data used to build machine-learning models. X_train.npy is a NumPy array representing the feature matrix, where each row corresponds to a compound and each column corresponds to a MACCS fingerprint bit retained after variance filtering. y_train.npy is the label vector, containing the binary activity labels (0 = inactive, 1 = active) for the same compounds, in the same row order.

These arrays are the direct inputs to model fitting (e.g., model.fit(X_train, y_train)). The training data may later be balanced, weighted, or resampled, depending on the modeling strategy, but these files preserve the original, stratified train split exactly as defined. To open and view the content of one of these file you can use the following command

import numpy as np

y_train = np.load("y_train.npy")

y_train[:5]

(Note: you have to set the path to the correct file location)

X_test.npy and y_test.npy

These files contain the held-out test data used to evaluate model performance. X_test.npy is the feature matrix for the test compounds, and y_test.npy contains their corresponding activity labels, aligned row-by-row with the feature matrix.

The test arrays are never modified (no balancing or resampling) and are used only for prediction and evaluation (e.g., confusion matrices, ROC curves, precision/recall). Because they were created using a stratified split, they reflect the natural class distribution of the dataset and provide an unbiased assessment of model generalization.

# Write code here to view a *.npy files in the arrays folder (see above hints)

import numpy as np

y_train = np.load(SPLITS/"arrays/y_train.npy")

y_train[:5]

array([0, 0, 1, 0, 0])

Looking Ahead: Preparing Data for Model Building

In the next section, we will reload these saved training and test arrays and begin preparing the training data for model construction. One common challenge in biological activity datasets is class imbalance, where the number of inactive compounds greatly exceeds the number of active compounds. When left unaddressed, this imbalance can cause a model to favor the dominant (majority) class rather than learning meaningful patterns associated with activity.

We will explore strategies for addressing this issue using only the training data, while keeping the test set unchanged so that model evaluation remains unbiased.

2. Preparing the Training Data Model Construction#

In this section, we focus exclusively on the training data and the preprocessing steps that are permitted during model construction. Unlike earlier steps, where we defined the feature space and created a fixed train–test split, the operations introduced here may intentionally modify the training data to help the model learn more effectively.

A key principle governs all steps in this section: the test set is never altered. Any transformations, resampling, or adjustments are applied only to the training data, ensuring that model evaluation remains unbiased and reflects performance on unseen data.

2.1 Reload the Saved Training and Test Arrays#

If you are continuing directly from Section 1 without restarting the kernel, the training and test arrays may already be in memory. However, it is important that you know how to reload these arrays from disk in case the kernel has been restarted or you are returning to the notebook at a later time. For that reason, we begin this section by explicitly loading the saved NumPy arrays. If the arrays are already in memory, you may treat this step as a demonstration of how the data can be recovered when needed.

from pathlib import Path

import numpy as np

# Define arrays directory (relative to notebook)

SPLIT_ROOT = Path("data/AID743139/splits/90_10/arrays")

# Load arrays into active memory

X_train = np.load(SPLIT_ROOT / "X_train.npy")

y_train = np.load(SPLIT_ROOT / "y_train.npy")

X_test = np.load(SPLIT_ROOT / "X_test.npy")

y_test = np.load(SPLIT_ROOT / "y_test.npy")

X_train.shape, y_train.shape

((6113, 162), (6113,))

The following code loads two objects into memory. Explain what these objects are, where they come from, and how they are used in the machine-learning workflow.

X_train = np.load(SPLIT_ROOT / "X_train.npy")

y_train = np.load(SPLIT_ROOT / "y_train.npy")Answer

The files X_train.npy and y_train.npy store NumPy arrays

on disk. These files are persistent, meaning they remain available even

after the kernel is restarted or the notebook is closed.

When np.load() is called, the data in these files are read from disk

and loaded into active memory as the variables X_train and

y_train. At this point, they are ordinary NumPy arrays

(numpy.ndarray) that can be passed directly to machine-learning

algorithms.

Although one representation exists on disk (nonvolatile) and the other exists in memory (volatile), they are structurally identical. Once loaded, the model cannot distinguish whether a NumPy array was created by computation or loaded from a file.

2.2 Examine class imbalance#

2.2.1 What is class imbalance and why it matters#

Before training a classification model, it is important to examine the distribution of class labels in the training set. In many biological datasets, the number of inactive compounds far exceeds the number of active compounds. This situation is known as class imbalance.

When class imbalance is present, a model may appear to perform well overall while failing to correctly identify the minority class. For example, a model trained on highly imbalanced data may learn to predict the majority class (typically inactives) most of the time, resulting in misleading accuracy and poor detection of the minority class (typically active compounds). By quantifying the class distribution early, we can assess the severity of imbalance and decide whether additional steps are needed to support effective model learning.

Strategies

Many classification problems involve imbalanced datasets, where one class occurs much more frequently than another.

If untreated, imbalance can cause models to:

Favor the majority class

Misclassify rare but important samples

Produce misleading accuracy metrics

Common strategies

There are three broad approaches to handling class imbalance:

1. Downsampling (Under-sampling)

Randomly remove samples from the majority class

Simple and effective

Risk: loss of potentially useful information

2. Oversampling

Increase the size of the minority class

Can be done by duplication or synthetic methods (e.g., SMOTE)

Risk: overfitting to replicated or artificial samples

3. Cost-sensitive learning

Penalize misclassification of the minority class more heavily

Often implemented via class weights

Keeps all data but changes the learning objective

Key takeaway

Handling class imbalance is not about maximizing accuracy; it is about shaping the decision boundary so that the assignment of class labels y to feature vectors X is driven by meaningful feature patterns, not by class frequency alone.

2.2.2 Measuring Class Imbalance in the Training Set#

To understand the severity of class imbalance in this dataset, we examine the number of inactive and active compounds in the training set and compute their ratio.

print("# inactives in training set: ", len(y_train) - y_train.sum())

print("# actives in training set: ", y_train.sum())

ratio = (len(y_train) - y_train.sum())/y_train.sum()

print("the ratio of inactive to active in training set=", ratio)

# inactives in training set: 5444

# actives in training set: 669

the ratio of inactive to active in training set= 8.137518684603886

How to interpret class balance ratios.

Ratio |

How to Interpret the Value |

|---|---|

1 |

Balanced: Roughly equal number of active and inactive. Ideal for training |

2 |

Mild imbalance: 1 active for every 2 inactives. Still manageable, but performance of minority class should be monitored. |

5 |

Severe imbalance. Model may predict majority class most of the time and ignore the minority class. |

Before applying any balancing strategy, it is important to keep the following workflow principles in mind:

Always save unbalanced train/test splits

Never balance the test set

Treat balancing as part of the model, not the data

Expect some variability when downsampling

Use fixed seeds when debugging, variable seeds when evaluating robustness

2.3 Balance the Training Set by Downsampling#

When a dataset has a strong class imbalance a common mitigation strategy is downsampling the majority class. In downsampling, we randomly select a subset of the majority class so that its size is comparable to that of the minority class. This encourages the model to learn decision boundaries that treat both classes more equitably, rather than defaulting to predictions dominated by the majority class. While downsampling reduces the total amount of training data and may discard potentially informative samples from the majority class, it often improves model performance on the minority class and reduces bias toward the majority class.

In the code cell below, we randomly select a subset of the inactive compounds such that their number matches the number of active compounds.

# Indices of each class

idx_inactives = np.where(y_train == 0)[0]

idx_actives = np.where(y_train == 1)[0]

# Number of observations in each class

num_inactives = len(idx_inactives)

num_actives = len(idx_actives)

# Downsample inactives to match number of actives

np.random.seed(0)

idx_inactives_downsampled = np.random.choice(

idx_inactives,

size=num_actives,

replace=False

)

# Create balanced training set

X_train_bal = np.vstack((

X_train[idx_inactives_downsampled],

X_train[idx_actives]

))

y_train_bal = np.hstack((

y_train[idx_inactives_downsampled],

y_train[idx_actives]

))

# Confirm balancing worked

print("# inactives:", len(y_train_bal) - y_train_bal.sum())

print("# actives: ", y_train_bal.sum())

ratio = (len(y_train_bal) - y_train_bal.sum()) / y_train_bal.sum()

print("ratio inactive to active =", ratio)

print("\nTraining set shape:")

print("X:", X_train_bal.shape)

print("y:", y_train_bal.shape)

# inactives: 669

# actives: 669

ratio inactive to active = 1.0

Training set shape:

X: (1338, 162)

y: (1338,)

The following is sample output from this dataset before and after downsampling. Your exact values may differ if a different random seed or dataset version is used.

| Training Set | # Inactives | # Actives | Inactive : Active Ratio | X Shape | y Shape |

|---|---|---|---|---|---|

| Original (Unbalanced) | 5444 | 669 | ≈ 8.1 : 1 | (6113, 162) | (6113,) |

| Balanced (Downsampled) | 669 | 669 | 1 : 1 | (1338, 162) | (1338,) |

1. Was the original set balanced or unbalanced and identify the majority and minority classes

Answer

The initial data set was highly imbalanced with 8 Inactives (majority class) for each active (minority class).

2. Explain from the data how down sampling worked

Answer

Initially there were 5,444 inactives and 669 actives in the dataset. The downsampling reduced the number of unbalanced to the number of balanced, giving 669 compounds of each class, with a total dataset of 1338 compounds.

3. Can you explain the shape of the feature matrix and label vector and what data they contain?

Answer

- The feature matrix

- Contains 162 columns representing the MACCS fingerprints after the zero-variance mask was applied.

- Each row represents a compound

- The original training se contains 6113 compounds

- The balanced (downsampled) training set contains 1338 compounds

- The label vector contains the Boolean value to indicate a compound is active (1) or inactive (0)

4. Do the balanced feature matrix (X_train_bal) or label vector

(y_train_bal) contain the chemical identity (e.g., CID) of each

compound? If not, why is this information absent, and how could the chemical

identity of a specific row be recovered if needed?

Answer

No. Neither the feature matrix (X_train_bal) nor the label vector (y_train_bal) contains explicit chemical identity information such as CIDs or SMILES strings. These NumPy arrays store only numerical data: fingerprint bits in X and binary activity labels in y.

This separation is intentional. Machine-learning models operate on numerical feature vectors and labels, not on compound identifiers. Including chemical identity directly in the feature or label arrays would mix metadata with model inputs and violate standard machine-learning design principles.

Chemical identity is instead preserved in separate artifacts created earlier in section 1.5.1 of this workflow, such as the train_cids.csv file and the corresponding index arrays (e.g., train_idx.npy). These files allow individual

rows in the NumPy arrays to be traced back to specific compounds without embedding identity information into the model’s inputs.

Explanation

This code performs down sampling using NumPy array operations rather than Pandas DataFrames. The goal is to construct a new, balanced training set from the original training data.

Identifying class membership

The calls to np.where() locate the row indices corresponding to

inactive (y = 0) and active (y = 1) compounds in the

training label vector.

idx_inactives = np.where(y_train == 0)[0]

idx_actives = np.where(y_train == 1)[0]

Random downsampling of the majority class

A subset of inactive indices is randomly selected without replacement so that the number of inactive samples matches the number of active samples.

idx_inactives_downsampled = np.random.choice(

idx_inactives,

size=num_actives,

replace=False

)

Reassembling the balanced training arrays

The balanced feature matrix and label vector are constructed by stacking the selected inactive samples together with all active samples.

X_train_bal = np.vstack((

X_train[idx_inactives_downsampled],

X_train[idx_actives]

))

y_train_bal = np.hstack((

y_train[idx_inactives_downsampled],

y_train[idx_actives]

))

The result is a balanced training dataset in which both classes are equally represented and aligned row-by-row.

Summary: Imbalanced vs. Balanced Training Data

At this point, we have two versions of the training data:

The original training set, which reflects the natural class imbalance of the dataset.

A balanced training set, created by downsampling the majority class.

Both versions are valid representations of the training data, but they serve different purposes. The imbalanced training set preserves the original data distribution, while the balanced training set emphasizes equal representation of both classes during model learning.

In the next section, we will build and evaluate a classification model using the balanced training data and compare its behavior to models trained on unbalanced data. This comparison will help illustrate how class imbalance and balancing strategies influence model predictions and performance.

3. Build a model using the training set.#

Now we are ready to build predictive models using machine learning algorithms available in the scikit-learn library (https://scikit-learn.org/). This notebook will use Naïve Bayes it is relatively fast and simple.

3.1 Naïve Bayes#

Naïve Bayes is a family of probabilistic classification algorithms that are particularly well suited for datasets where features are represented as binary indicators. In cheminformatics, this commonly occurs when molecules are encoded using molecular fingerprints, where each feature answers a yes/no question, such as whether a specific substructure is present in the molecule. Bernoulli Naïve Bayes is the variant designed specifically for this situation. It treats each fingerprint bit as a binary feature (0 or 1) and learns how often each feature appears in each class (for example, active versus inactive compounds). During prediction, the model combines this information across all features to estimate which class best matches the observed pattern of fingerprint bits.

A simplifying assumption made by Naïve Bayes is that each feature contributes independently to the classification decision. In other words, the model treats each fingerprint bit as providing its own piece of evidence, without explicitly accounting for relationships between bits. This assumption is more reasonable for some types of molecular fingerprints than others. For example, MACCS keys are based on a fixed set of predefined structural patterns, and many of these bits represent distinct chemical features. In contrast, Morgan fingerprints are generated from overlapping atomic environments, so multiple bits may be activated by the same underlying substructure. As a result, Morgan fingerprint bits tend to be more strongly correlated with one another.

In practice, the independence assumption is rarely strictly true,especially in chemistry, where molecular features are inherently related. However, Naïve Bayes often performs well despite this simplification, particularly for high-dimensional, sparse feature vectors such as molecular fingerprints. The algorithm’s simplicity can make it surprisingly robust, even when its assumptions are only approximately satisfied.

In addition to modeling how features behave within each class, Naïve Bayes also incorporates how common each class is in the training data. When the dataset is balanced, the model treats each class as equally plausible before considering any molecular features, so classification decisions are driven primarily by how well the fingerprint pattern matches each class. When the dataset is imbalanced, the model naturally favors the more common class unless the feature evidence strongly supports the alternative. This interaction between feature evidence (X) and class prevalence (y) plays an important role in how Naïve Bayes behaves and motivates strategies such as downsampling.

Please see Appendix 10.2” Bayes’s Theorem: From Inference to Models for a workup on how Naïve Bayes works.

Explanation

Bernoulli Naïve Bayes is a probabilistic model, meaning it uses probabilities to decide which class label is most consistent with the observed features. At a high level, the model answers the question: “Given the fingerprint bits for this molecule, which class is more likely, 1 (active) or 0 (inactive)?”

The core idea: updating beliefs

The model starts with a baseline expectation about each class (for example, how common active compounds are overall) and then updates that expectation based on the observed fingerprint bits. This idea is formalized by Bayes’ Theorem:

Where:

\( y \) is the class label (e.g., active or inactive)

\( X \) represents all fingerprint bits for a molecule

\( P(y \mid X) \) is the probability of class ( y ) given the observed features

In practice, the model compares this quantity across classes and selects the most likely one.

What each term means (intuitively)

\( P(y) \) — the prior

This represents how common each class is before looking at any molecular features. If most compounds are inactive, the prior probability of “inactive” is higher.

\( P(X \mid y) \) — the likelihood

This measures how well the observed fingerprint bits match what the model has learned about class ( y ). This is where the fingerprint information is used.

\( P(X) \) — the evidence

This term ensures probabilities are properly scaled. Because it is the same for all classes, it does not affect which class is chosen and is usually ignored during classification.

The “naïve” assumption: breaking features apart

Computing \( P(X \mid y) \) directly would be extremely difficult for molecular fingerprints, because many bits are related to one another. Naïve Bayes simplifies this by assuming that each fingerprint bit can be treated independently when estimating probabilities. This allows the likelihood to be written as a product:

Each fingerprint bit contributes its own small piece of evidence toward the final decision. Together, these pieces of evidence are combined to determine which class the molecule most closely resembles overall.

Why it is called Bernoulli Naïve Bayes In Bernoulli Naïve Bayes, each fingerprint bit is treated as a Bernoulli random variable, meaning it can take only two values:

Value = 1 → feature is present

Value = 0 → feature is absent

The class label \( 𝑦 \) also takes on discrete values (for example, 0 = inactive and 1 = active). During training, the model looks at all molecules in each class and learns how often each fingerprint bit is present or absent within that class. For each bit and each class, the model learns a probability such as:

The above equation is saying that, among molecules in class \(y\), how common it is for bit \(i\) to be present (\(=1\)).

During prediction:

If a bit is present, the model uses the probability of presence

If a bit is absent, the model uses the probability of absence

These probabilities are multiplied together across all bits to form an overall score for each class.

Why the math still works in practice

Even though fingerprint bits are not truly independent—especially for Morgan fingerprints—the model often performs well because:

Many weak signals combine into a strong overall pattern

Errors introduced by the independence assumption tend to cancel out

Classification depends on relative likelihoods, not exact probabilities

As a result, Naïve Bayes often provides good classification performance even when its assumptions are only approximately true.

For a more detailed explaination go to Appendix A10.2 Bayes’ Theorem: From Inference to Models

from sklearn.naive_bayes import BernoulliNB #-- Naïve Bayes

# set up the NB classification model. Bernoulli is specific for binary features (0,1)

clf_NB = BernoulliNB()

In scikit-learn examples, the prefix clf is commonly used as shorthand for classifier. In this notebook, clf_NB refers to a Bernoulli Naïve Bayes classifier object. This naming convention is descriptive only; it is not required by Python or by scikit-learn. Its purpose is to make the role of the object explicit within the machine-learning workflow.

At this point in the course, two perspectives are coming together:

The scientific/statistical model, introduced conceptually in Appendix A-10.2

The scikit-learn implementation, which represents that model as a Python object with a defined interface and behavior

The BernoulliNB class in scikit-learn is a concrete implementation of the Naïve Bayes probabilistic model described in the Appendix.

The call

clf_NB.fit(X_train_balanced, y_train_balanced)

trains the Bernoulli Naïve Bayes model using the training data. In scikit-learn terminology, fitting a model means estimating the parameters of the statistical model from the data and storing them inside the classifier object.

During training, the classifier learns how molecular feature vectors are associated with activity labels. Specifically, it estimates two categories of quantities from the training set:

Feature–class likelihoods For each feature (each column in

X_train_balanced) and for each class label iny_train_balanced, the model estimates how frequently that feature occurs among samples belonging to that class. Because Bernoulli Naïve Bayes is designed for binary features, this corresponds to learning how often each fingerprint bit is equal to 1 within each class.Class prior probabilities The model also estimates how common each class is in the training data. These class frequencies form the prior probabilities, which represent how likely each class is before considering any molecular features.

Together, these learned quantities define the decision rule used by the classifier. After training is complete, the model has all the information required to evaluate new molecular feature vectors. At this stage, the model is not yet making predictions. Instead, it is establishing and storing the statistical relationships that will later be used during evaluation and inference.

Conceptually, the trained BernoulliNB object is the computational realization of the probabilistic model described in Appendix A-10.2. Once trained, it exposes two primary interfaces:

predict_proba, which returns class probabilitiespredict, which returns discrete class assignments

These interfaces will be used explicitly in later sections when we evaluate model performance and apply the model to new data.

# Train the model by fitting it to the data.

clf_NB.fit( X_train_bal, y_train_bal)

print("Model expects features:", clf_NB.n_features_in_)

clf_NB

Model expects features: 162

BernoulliNB()In a Jupyter environment, please rerun this cell to show the HTML representation or trust the notebook.

On GitHub, the HTML representation is unable to render, please try loading this page with nbviewer.org.

Parameters

| alpha | 1.0 | |

| force_alpha | True | |

| binarize | 0.0 | |

| fit_prior | True | |

| class_prior | None |

Explanation

After fitting the model, Jupyter displays a summary of the BernoulliNB object. This output does not show predictions or results; instead, it reports the configuration parameters that define how the model learned from the data and how it will behave during prediction. These parameters control how probabilities are estimated and how the learned decision rule is constructed.

Model type: BernoulliNB

This confirms that the classifier is designed for binary features (0 or 1), such as molecular fingerprint bits. Each feature is treated as a Bernoulli random variable indicating presence or absence.

alpha = 1.0 — smoothing parameter

This parameter controls Laplace smoothing, which prevents estimated probabilities from becoming exactly 0 or 1.

If a fingerprint bit never appears within a given class—that is, for all training samples with a particular class label ( y ), the feature value ( \(x_i = 0\) ), then without smoothing the model would assign a probability of zero to that feature for that class. During prediction, this would cause the entire class to be ruled out whenever that bit is present. Laplace smoothing instead assigns a small, nonzero probability.

This avoids numerical issues and makes the model more robust, especially when working with limited or sparse data.

This is different from the earlier feature selection step, where we removed features that did not change across the entire dataset (for example, the MACCS bit position that was always zero for all molecules).

Smoothing ensures that no single missing feature completely rules out a class.

force_alpha = True

This forces the model to always use the specified value of alpha.

Ensures consistent smoothing behavior

Mainly an internal safety setting

For most users, this parameter does not need to be changed.

binarize = 0.0

This parameter controls whether input features are automatically converted to binary values.

Values greater than 0.0 → 1

Values less than or equal to 0.0 → 0

In this workflow:

Molecular fingerprints are already binary

This parameter effectively has no impact

fit_prior = True

This tells the model to learn class priors from the training labels (y_train).

If the training data are balanced, the learned priors will be equal

If the training data are imbalanced, the learned priors will reflect that imbalance, giving more weight to the more common class.

As a result, when fit_prior=True, class frequency influences how strongly the model favors one class over another. In an imbalanced dataset, the model will require stronger feature evidence to predict the minority class. Setting fit_prior=False forces the model to treat all classes as equally likely, which may be useful in some cases but can lead to unreliable probability estimates when the training data are highly imbalanced, and so the default is true.

class_prior = None

This indicates that no manual class priors were provided.

Because

fit_prior = Trueandclass_prior = None, the model estimates class priors automatically from the training labels (y_train).In other words, the frequency of each class in the training data determines how strongly the model expects each class before considering any features.

It is also possible to override this behavior by supplying class priors explicitly when the model is created (for example, to force equal weighting of classes). However, in this lesson we allow the model to infer priors from the training data so that the connection between data preparation (such as downsampling) and model behavior remains transparent.

Big-picture takeaway

Together, these parameters define how Bernoulli Naïve Bayes:

Estimates feature probabilities within each class

Incorporates class balance through priors

Constructs a decision rule that maps feature vectors X to class labels y

The model has now learned this decision rule internally. In the next step, we will apply it to new data to see how well it generalizes beyond the training set.

3.2 From Training to Inference: What does the Classifier Produce?#

Model evaluation depends on what type of output the classifier produces: class labels or probabilities.

Once a classifier has been trained, it can produce two different kinds of outputs, depending on which method is used. Understanding this distinction is essential, because different evaluation metrics require different types of outputs.

The method .predict() returns discrete class labels. For a binary classifier, these are integers such as 0 (inactive) or 1 (active). Calling .predict() answers the question: “Which class does the model assign to each compound?” These class labels are used to construct confusion matrices and to compute metrics such as accuracy, precision, recall, and F1-score.

In contrast, .predict_proba() returns probabilistic scores. For each compound, the model reports its estimated probability of belonging to each class. For binary classification, this means a pair of values that sum to 1. These probabilities answer a different question: “How confident is the model in its prediction?” Probabilistic outputs are required for threshold-independent metrics such as ROC curves and ROC–AUC.

The difference can be seen directly by running the following code and we will go over these in the next two sections

# Discrete class predictions (0 or 1)

y_pred = clf_NB.predict(X_test)

# Probabilistic predictions (confidence scores)

y_proba = clf_NB.predict_proba(X_test)

y_pred[:5], y_proba[:5]

(array([0, 0, 1, 0, 0]),

array([[9.99990160e-01, 9.83971842e-06],

[5.73893051e-01, 4.26106949e-01],

[6.58510978e-03, 9.93414890e-01],

[9.48421570e-01, 5.15784297e-02],

[8.86150183e-01, 1.13849817e-01]]))

Explanation

The scikit-learn library follows the X/y

conventions consistently when fitting models and generating predictions.

- Models are trained using

model.fit(X, y) Xis treated as a feature matrixyis treated as a target vector

For probabilistic classifiers such as Naïve Bayes, prediction occurs in two conceptual steps:

X → P(y | X) → y_hat

-

X— the feature matrix- Numerical representation of the compounds

- Each row corresponds to one compound

- Each column corresponds to one descriptor or fingerprint bit

-

P(y | X)— the posterior class probabilities- The model’s estimated probability for each class

- Computed internally using Bayes’ theorem

- Returned by

model.predict_proba(X)

-

y_hat— the predicted class label- The single class assigned to each compound

- Chosen as the class with the highest probability

- Returned by

model.predict(X)

In short, predict_proba() computes probabilities, while

predict() applies a decision rule based on those probabilities.

Even when Pandas DataFrames or Series are supplied, scikit-learn converts them

internally to NumPy arrays, preserving the matrix–vector structure

implied by X and y.

Explanation

The scikit-learn library follows the X/y

conventions consistently when fitting models and generating predictions.

- Models are trained using

model.fit(X, y) Xis treated as a feature matrixyis treated as a target vector

For probabilistic classifiers such as Naive Bayes, prediction occurs in two conceptual steps:

\( \mathbf{X} \;\rightarrow\; P(y \mid \mathbf{X}) \;\rightarrow\; \hat{y} \)

-

X— the feature matrix- Numerical representation of the compounds

- Each row = one compound

- Each column = one descriptor or fingerprint bit

-

\( P(y \mid X) \): the posterior probability

- The model’s estimated probability of each class

- Computed using Bayes’ theorem

- Returned by

model.predict_proba(X)

-

\( \hat{y} \): the predicted class label

- The single class chosen for each compound

- Selected as the class with the highest probability

- Returned by

model.predict(X)

Here, predict_proba() computes probabilities, while

predict() applies a decision rule based on those probabilities.

Even when Pandas DataFrames or Series are used, scikit-learn converts them

internally to NumPy arrays, preserving the matrix–vector structure

implied by X and y.

Explanation

The scikit-learn library follows the X/y

conventions consistently when fitting models and generating predictions.

- Models are trained using

model.fit(X, y) Xis treated as a feature matrixyis treated as a target vector

For probabilistic classifiers such as Naive Bayes, prediction occurs in two conceptual steps:

\( \mathbf{X} \;\rightarrow\; P(y \mid \mathbf{X}) \;\rightarrow\; \hat{y} \)

-

X— the feature matrix- Numerical representation of the compounds

- Each row = one compound

- Each column = one descriptor or fingerprint bit

-

\( P(y \mid X) \): the posterior probability

- The model’s estimated probability of each class

- Computed using Bayes’ theorem

- Returned by

model.predict_proba(X)

-

\( \hat{y} \): the predicted class label

- The single class chosen for each compound

- Selected as the class with the highest probability

- Returned by

model.predict(X)

Here, predict_proba() computes probabilities, while

predict() applies a decision rule based on those probabilities.

Even when Pandas DataFrames or Series are used, scikit-learn converts them

internally to NumPy arrays, preserving the matrix–vector structure

implied by X and y.

4. Classification-Based Inference (.predict)#

With a trained classifier in hand, we now turn to its use for classification-based inference. In this mode, the model assigns each compound to a discrete class, such as inactive (0) or active (1), based on the learned decision rule. Evaluating the model at this stage means examining how these class assignments compare to known reference labels and analyzing the types of classification errors that occur.

In scikit-learn, classification evaluation is performed by comparing two aligned arrays:

the known class labels for a dataset, often referred to conceptually as “ground truth” (

y_train_balory_test)the class labels predicted by the model using

.predict()(y_pred)

Rather than introducing a separate variable such as y_true, this notebook consistently uses the existing dataset labels (y_train_bal or y_test) to make it explicit which dataset is being evaluated and to reinforce the distinction between training and test data. This section focuses on classification outcomes and begins with the confusion matrix, which provides a complete summary of how predicted class labels compare to known labels. Summary metrics derived from the confusion matrix are introduced afterward.

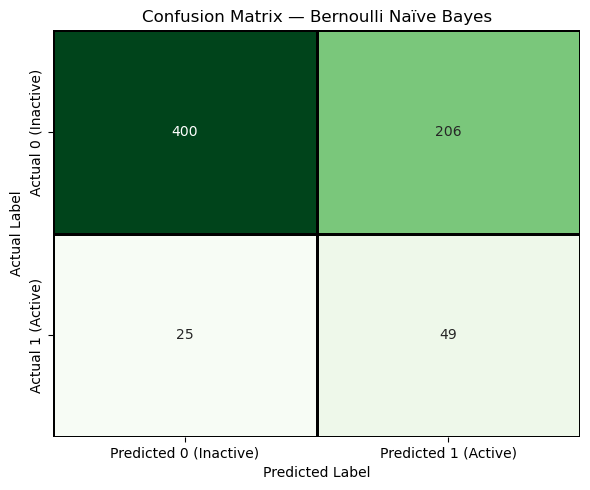

4.1 Confusion Matrix for Classification Evaluation#

To evaluate how well the trained Bernoulli Naïve Bayes model classifies compounds, we compare the model’s predicted class labels to the known reference labels using a confusion matrix. A confusion matrix always compares two one-dimensional arrays:

the reference labels for a dataset (

y_train_balory_test)the predicted class labels produced by the model for the same samples (

y_pred)

In this section, evaluation is performed using the test set, which provides an unbiased estimate of classification performance on unseen data. The known labels are therefore y_test, and the predicted labels (y_pred) are generated by applying the trained model to X_test.

The resulting confusion matrix organizes predictions into four categories: true positives, true negatives, false positives, and false negatives. These counts form the foundation for many commonly used classification metrics and provide direct insight into the types of errors the model makes.

4.2 Load Feature Arrays and Generate the Confusion Matrix#

# Imports and paths

from pathlib import Path

import numpy as np

SPLIT_ROOT = Path("data/AID743139/splits/90_10/arrays")

# Load saved feature arrays and labels

X_train = np.load(SPLIT_ROOT / "X_train.npy")

X_test = np.load(SPLIT_ROOT / "X_test.npy")

y_train = np.load(SPLIT_ROOT / "y_train.npy")

y_test = np.load(SPLIT_ROOT / "y_test.npy")

# Balance the training set (downsample inactives)

idx_inactives = np.where(y_train == 0)[0]

idx_actives = np.where(y_train == 1)[0]

np.random.seed(0)

idx_inactives_down = np.random.choice(

idx_inactives,

size=len(idx_actives),

replace=False

)

X_train_bal = np.vstack((

X_train[idx_inactives_down],

X_train[idx_actives]

))

y_train_bal = np.hstack((

y_train[idx_inactives_down],

y_train[idx_actives]

))

# Train the classifier

from sklearn.naive_bayes import BernoulliNB

from sklearn.metrics import confusion_matrix, classification_report

clf_NB = BernoulliNB()

clf_NB.fit(X_train_bal, y_train_bal)

# Generate test-set predictions

y_test_pred = clf_NB.predict(X_test)

# Confusion matrix

cm = confusion_matrix(y_test, y_test_pred)

TN, FP, FN, TP = cm.ravel()

print("Test-set Confusion Matrix")

print(cm)

# Classification report

#|print("\nTest-set Classification Report")

#print(classification_report(y_test, y_test_pred))

Test-set Confusion Matrix

[[400 206]

[ 25 49]]

These two lines perform the core evaluation step.

y_pred = clf_NB.predict(X_test)

cm = confusion_matrix(y_test, y_pred)

print(cm) # [[TN, FP],

# [FN, TP]]

# Extracting TN, FP, FN, TP from the confusion matrix

TN = cm[0, 0] # True Negatives

FP = cm[0, 1] # False Positives

FN = cm[1, 0] # False Negatives

TP = cm[1, 1] # True Positives

print("True Negatives (TN):", TN)

print("False Positives (FP):", FP)

print("False Negatives (FN):", FN)

print("True Positives (TP):", TP)

print("Total predictions:", TN + FP + FN + TP)

[[400 206]

[ 25 49]]

True Negatives (TN): 400

False Positives (FP): 206

False Negatives (FN): 25

True Positives (TP): 49

Total predictions: 680

# -------------------------------------------------

# Result persistence setup

# -------------------------------------------------

from pathlib import Path

import json

import numpy as np

import pandas as pd

from datetime import date

RESULTS_ROOT = Path("results/AID743139/nb")

RESULTS_ROOT.mkdir(parents=True, exist_ok=True)

print("Results will be saved to:")

print(RESULTS_ROOT.resolve())

# -------------------------------------------------

# Save test-set predictions

# -------------------------------------------------

# y_test : known labels (ground truth)

# y_test_pred : model predictions

df_test_pred = pd.DataFrame({

"y_true": y_test, # y_true : reference labels for evaluation (here: y_test)

"y_pred": y_test_pred # y_pred : labels predicted by the model

})

pred_path = RESULTS_ROOT / "test_predictions.csv"

df_test_pred.to_csv(pred_path, index=False)

print(f"Saved test-set predictions to {pred_path.name}")

df_test_pred.head()

Results will be saved to:

/home/rebelford/jupyterbooks/cinf26book/content/modules/10_SupervisedML/results/AID743139/nb

Saved test-set predictions to test_predictions.csv

| y_true | y_pred | |

|---|---|---|

| 0 | 0 | 0 |

| 1 | 0 | 0 |

| 2 | 0 | 1 |

| 3 | 0 | 0 |

| 4 | 0 | 0 |

4.3 Saving Confusion Matrices and Metrics#

# -------------------------------------------------

# Save confusion matrix

# -------------------------------------------------

cm_path = RESULTS_ROOT / "confusion_matrix_test.npy"

np.save(cm_path, cm)

print(f"Saved confusion matrix to {cm_path}")

print("Confusion matrix contents:")

print(cm)

Saved confusion matrix to results/AID743139/nb/confusion_matrix_test.npy

Confusion matrix contents:

[[400 206]

[ 25 49]]

4.3.1 Recording Experimental Metadata#

# -------------------------------------------------

# Save evaluation metrics and metadata

# -------------------------------------------------

from sklearn.metrics import accuracy_score

metrics = {

"accuracy": accuracy_score(y_test, y_test_pred),

"TN": int(TN),

"FP": int(FP),

"FN": int(FN),

"TP": int(TP)

}

metrics_path = RESULTS_ROOT / "metrics_test.json"

with open(metrics_path, "w") as f:

json.dump(metrics, f, indent=2)

metadata = {

"assay": "AID743139",

"model": "BernoulliNB",

"features": "MACCS",

"split": "90_10",

"training_balanced": True,

"evaluation_set": "test",

"date": date.today().isoformat(),

"notebook": "10.2_naive_bayes.ipynb"

}

metadata_path = RESULTS_ROOT / "results_metadata.json"

with open(metadata_path, "w") as f:

json.dump(metadata, f, indent=2)

print("Saved metrics and metadata:")

print(f" - {metrics_path}")

print(f" - {metadata_path}")

Saved metrics and metadata:

- results/AID743139/nb/metrics_test.json

- results/AID743139/nb/results_metadata.json

4.4 Interpreting the Confusion Matrix#

There is no single number that fully describes how a classifier performs. Instead of immediately collapsing model behavior into a summary statistic, we begin by examining the confusion matrix, which records how predictions are distributed across correct and incorrect outcomes. By separating true positives, false positives, true negatives, and false negatives, the confusion matrix makes explicit which types of mistakes the model makes and how often they occur. This structured view provides the foundation for all subsequent evaluation metrics.

Historically, the confusion matrix is so named because it explicitly shows where a model “confuses” one class for another, rather than hiding those errors inside a single summary value. Simply speaking, a False positive was when the model thought an inactive compound was active, and a false negative is when the model predicts an active compound is inactive.

In the sections that follow, we will use the confusion matrix to derive quantitative evaluation measures. Each metric is computed directly from these counts, and their meaning is best understood only after the structure of the confusion matrix is clear.

In this class we will use the scikit-learn convention above, where labels are sorted \([0,1]\) and align with binary encoding 0 (inactive) and 1 (active).

| Actual | Predicted | |

|---|---|---|

| 0 | 1 | |

| 0 | TN True Negative |

FP False Positive |

| 1 | FN False Negative |

TP True Positive |

You need to be aware that there is an alternate convention that starts with the positive \([1,0]\) type ordering.

Explanation

Many sources choose to place the positive first and negative second, essentially reversing the boolean relationships, as shown in the following confusion matrix.| Actual | Predicted | |

|---|---|---|

| 1 | 0 | |

| 1 | TP True Positive |

FN False Negative |

| 0 | FP False Positive |

TN True Negative |

We will not use this convention in this class, but when comparing data you need to be aware of which convention is being used.

import seaborn as sns

import matplotlib.pyplot as plt

import numpy as np

plt.figure(figsize=(6, 5))

sns.heatmap(

cm,

annot=True,

fmt="d",

cmap="Greens",

cbar=False,

xticklabels=["Predicted 0 (Inactive)", "Predicted 1 (Active)"],

yticklabels=["Actual 0 (Inactive)", "Actual 1 (Active)"],

linewidths=1,

linecolor="black"

)

plt.title("Confusion Matrix — Bernoulli Naïve Bayes")

plt.xlabel("Predicted Label")

plt.ylabel("Actual Label")

plt.tight_layout()

plt.show()

The diagonal cells represent correct predictions, while the off-diagonal cells represent errors. The confusion matrix makes it visually clear that different types of errors occur, which is why we need multiple evaluation metrics rather than a single accuracy value

4.5 Confusion Matrix Based Evaluation Metrics#

The confusion matrix reveals which kinds of mistakes the model makes.

Predicted Inactive |

Predicted Active |

|

|---|---|---|

Actual Inactive |

TN (True Negative) |

FP (False Positive) |

Actual Active |

FN (False Negative) |

TP (True Positive) |

4.5.1 Table of Confusion Matrix Metrics#

All the metrics below are derived from TN, TP, FN & FP and summarize the model’s validity (mistakes) from different scientific perspectives.

Metric |

Mathematical Definition |

What This Metric Tells Us |

|---|---|---|

Accuracy |

\(\displaystyle \frac{TP + TN}{TP + TN + FP + FN}\) |

What fraction of all predictions—active and inactive—were correct overall. |

Precision |

\(\displaystyle \frac{TP}{TP + FP}\) |

When the model predicts “active,” how often is it actually correct? |

Sensitivity (Recall) |

\(\displaystyle \frac{TP}{TP + FN}\) |

Of all truly active compounds, how many did the model successfully identify? |

Specificity |

\(\displaystyle \frac{TN}{TN + FP}\) |

Of all truly inactive compounds, how many did the model correctly reject? |

Balanced Accuracy |

\(\displaystyle \frac{\text{Sensitivity} + \text{Specificity}}{2}\) |

How well the model performs across both classes, even when the dataset is imbalanced. |

F1 Score |

\(\displaystyle \frac{2,(\text{Precision}\times\text{Sensitivity})}{\text{Precision} + \text{Sensitivity}}\) |

A single score that balances finding actives with avoiding false positives. |

# --- Test metrics ---

acc_test = accuracy_score(y_test, y_test_pred)

prec_test = TP / (TP + FP) if (TP + FP) else 0.0

sens_test = TP / (TP + FN) if (TP + FN) else 0.0

spec_test = TN / (TN + FP) if (TN + FP) else 0.0

bacc_test = (sens_test + spec_test) / 2

f1_test = (2 * prec_test * sens_test / (prec_test + sens_test)) if (prec_test + sens_test) else 0.0

print("TEST SET PERFORMANCE")

print(f"Accuracy = {acc_test:.4f}")

print(f"Precision = {prec_test:.4f}")

print(f"Sensitivity = {sens_test:.4f}")

print(f"Specificity = {spec_test:.4f}")

print(f"Balanced Accuracy = {bacc_test:.4f}")

print(f"F1 Score = {f1_test:.4f}")

TEST SET PERFORMANCE

Accuracy = 0.6603

Precision = 0.1922

Sensitivity = 0.6622

Specificity = 0.6601

Balanced Accuracy = 0.6611

F1 Score = 0.2979